Optimize a query on a Map

Comparing techniques for performance tuning a query on a Map class

In “Optimizing a query on a collection”, I considered how to optimize a query on an indexable collection. However, optimizing queries on Map classes turns out to be more complicated. In this article, I’ll provide an example of how you can speed up bottlenecks consisting of Map queries.

The query

First I’ll start with the problem. I’ll use strings as keys for my collection. For their corresponding values, I’ll simply use integer objects to indicate some value object. For the query, I’ll use a simple test that checks whether any particular string key includes one of a specified substrings set, and the query will simply return the summation of the integer values for those string keys, which include the substrings. For example, the Map might be:

- key -> value

- “code” -> 1

- “rode” -> 2

- “load” -> 3

- “toad” -> 4

- “road” -> 5

The query in that case might be “sum of the values for those string keys in the map that contain the substrings od or lo” (the answer would be 1+2+3=6 for this example list).

For my actual keys, I’ll generate multicharacter strings by using lowercase letters (a to z). For example, a collection of all four-character strings would generate a collection of 26 x 26 x 26 x 26 = 456976 four-character strings. The values will simply be an integer counter that increments by one as each string is added to the Map. I’ll query that Map for the summation of integer values for those strings that contain any of the substrings ie, xy, or pq. I’ve elected to use a Hashtable object to hold the collection for the start of the tests.

I’ve chosen to use an easily generated collection for the data and a straightforward query to avoid any application-specific distractions. I want to focus on the tuning here. The query represents many query types I’ve seen in applications, though a more representative test would return the value collection for the keys that satisfy the query. I’ve opted for the summation to avoid generating too many collections in my tests.

The simple straightforward version of the query is:

int count = 0;

Enumeration enumeration = map.keys();

String s;

while(enumeration.hasMoreElements())

{

s = (String) enumeration.nextElement();

if( ( s.indexOf("ie") != -1 )

|| ( s.indexOf("xy") != -1 )

|| ( s.indexOf("pq") != -1 ) )

{

count += ((Integer) this.get(s)).intValue();

}

}

return count;

For the tests, I’ll use the Sun virtual machines (VMs) from the Java SDK 1.2 and 1.3. In addition, I’ll test with the HotSpot VMs delivered with those two SDKs — HotSpot 1.0 and 2.0. I’ll also test one non-JIT VM, which in this case will be the 1.2 VM with the JIT turned off. You can easily turn off the JIT by setting the java.compiler property to NONE:

java "-Djava.compiler=NONE" ...

Avoid synchronization

As I said earlier, I started by using a Hashtable object to hold the map. In most applications, bottleneck queries tend to be read-only or single-threaded. In either case, you can normally use a nonsynchronized object to hold the collection. To do so here requires a simple change to using a HashMap object instead of the Hashtable object I initially used. In addition, I need to change the code to use an iterator, as HashMap does not support an enumerator. In fact, I really shouldn’t have used the enumerator in the original query since the Map interface does not support its use.

The query now reads as the following:

int count = 0;

Iterator iterator = map.keySet().iterator();

String s;

while(iterator.hasNext())

{

s = (String) iterator.next();

if( ( s.indexOf("ie") != -1 )

|| ( s.indexOf("xy") != -1 )

|| ( s.indexOf("pq") != -1 ) )

{

count += ((Integer) this.get(s)).intValue();

}

}

return count;

However, the test (test2 in Table 1 below) does not change the query time very much. A couple of the VMs even register slower times. That looks slightly odd to me and, looking back at what I’ve done, I noticed that I’ve been a little naughty. I made two changes to the code and tested those changes together without determining whether either change individually speeds up the query time. I changed the class and changed the enumerator to an iterator. I should really check each change separately.

My first concern is that the iterator is accessed via a Set of Map elements. I would have a real performance problem if the Set were a whole new collection with all the elements copied from Map. But the Set implementations of the JDK Map classes are actually alternate views of the underlying collection, and so they should not cause any adverse performance impact for this query.

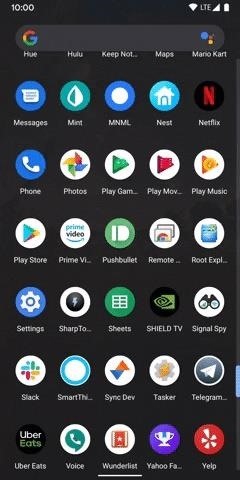

| Test | 1.2 JIT VM | HotSpot 1.0 2nd run* | 1.3 JIT VM | HotSpot 2.0 2nd run* | 1.2 non-JIT | Test details |

|---|---|---|---|---|---|---|

| test1 | 100% | 129% | 134% | 125% | 985% | Hashtable with enumerator |

| test2 | 98.1% | 129% | 135% | 124% | 1104% | HashMap with iterator |

| test3 | 108% | 131% | 138% | 125% | 1187% | Hashtable with iterator |

| test4 | 91.5% | 125% | 124% | 118% | 1001% | replaced .hasNext() call in test2 |

| test5 | 120% | 131% | 134% | 126% | 1009% | use Map.Entry Set view |

| test6 | 83.0% | 107% | 102% | 99.1% | 727% | query in Map class |

| test7 | 78.1% | 103% | 105% | 102% | 720% | specialized Map class of String keys and int values |

| test8 | 77.7% | 73.3% | 104% | 69.7% | 697% | specialized Map class of char[] keys and int values |

| test9 | 19.4% | 18.7% | 22% | 18.5% | 111% | specialized Map class of four-char keys and int values |

| test10 | 8.7% | 12.0% | 11.4% | 10% | 82.3% | perfect minimum hashing on four-char keys and int values |

NOTE: HotSpot VMs run methods in interpreted mode until the HotSpot VM profiler decides that the method is better run natively compiled. The method is then natively compiled with extensive optimizations applied to it. For the test runs here, some methods were optimized by the second test run, but not until the third test run were the HotSpot optimizations fully applied to the test by the VM. Consequently, I show the results of the first and third test runs in the HotSpot VMs.

The process of testing the two changes individually is straightforward: simply use a Hashtable with the iterator. The test result (test3 in Table 1) is very illuminating. Test3 clearly shows that enumerators are faster than iterators for the Maps I’ve reviewed here, and that is probably true for other JDK classes. The slower iterators combined with the faster nonsynchronized HashMap class balanced each other out to give test2’s mixed results. Unfortunately, we don’t have the option of using the faster nonsynchronized HashMap together with the faster enumerators.

Eliminate the repeated Iterator.hasNext()

Let’s move on with performance tuning. The next obvious optimization is to eliminate the repeated Iterator.hasNext() call in the for loop test. You already know how many elements there are, so you don’t need to ask if there are more.

This is a simple change. You can replace:

while(iterator.hasNext())

with:

for (int size = map.size(); size > 0; size--)

The test results are in test4 of Table 1. I’ve stuck with the HashMap and the iterator for test4, so test2 is the previous directly comparable test. The results show that the optimization is unequivocally better for all VMs. But my overall tuning effort so far shows only a small improvement.

Avoid the repeated Map.get() call

As with the last optimization, you can eliminate another method call, the Map.get() call. That call is used to get the value for the keys that satisfy your query.

You can eliminate the Map.get() call because Map classes support a set view that contains key-value pairs, obtained from the Map.entrySet() method. The elements of that set are java.util.Map.Entry objects, so you can access the value objects directly after you query the keys. The query code now looks like this:

int count = 0;

Iterator iterator = this.entrySet().iterator();

String s;

Map.Entry entry;

for (int size = this.size(); size > 0; size--)

{

entry = (Map.Entry) iterator.next();

s = (String) entry.getKey();

if( ( s.indexOf("ie") != -1 )

|| ( s.indexOf("xy") != -1 )

|| ( s.indexOf("pq") != -1 ) )

{

count += ((Integer) entry.getValue()).intValue();

}

}

return count;

Unfortunately, the test results (test5 in Table 1) show that change to be unambiguously bad for performance. Although the idea of replacing the Map.get() call with a quicker data member access is nominally good, you unfortunately have to replace it with two method accesses and an extra cast that swamp any advantages you may have gained. That optimization should be immediately discarded.

Avoid the method accessors

A standard optimization for queries on collections is to reimplement the query in the collection class so that you can avoid having to repeatedly access elements through accessor methods. Unfortunately, JDK Map classes that define their internal elements as protected do not exist, so you cannot just subclass an existing Map and add the query. Instead, you need to create your own Map class. But that is not a large problem since many examples of hash map classes with source code are available (from the JDK and the Web).

To define the query, you need to know how a hash map holds its elements internally. I’ll use a standard implementation similar to HashMap from the JDK for my class. This element storage algorithm is not too difficult:

- Obtain an index from the key. The easiest way to do this is to use the key object’s hashcode and modulo it by the size of the internal collection.

- Use the index to insert the element into the internal collection. Since the index could be the same for multiple objects, that location in the internal table is actually a node of a linked list, and the key and corresponding value is added as the last node of the list.

So the internal structure is an array of linked list nodes, with each node holding a key and value. Now you can define your query within the class:

//hold the internal table in a local for slightly faster access

//Test6MapEntry is the name of my linked list node class

Test6MapEntry tab[] = table;

int counter = 0;

String s;

//Run through the elements of the internal array

for (int i = tab.length ; i-- > 0 ;)

{

//The current element could be null or a node. If it is a node

//you have at least one entry at this index. There could be several

//entries, and you need to iterate through all the nodes in the

//list at this index.

for (Test6MapEntry e = tab[i] ; e != null ; e = e.next)

{

//the key is Test6MapEntry.key

s = (String) e.key;

if( ( s.indexOf("ie") != -1 )

|| ( s.indexOf("xy") != -1 )

|| ( s.indexOf("pq") != -1 ) )

{

//the value is Test6MapEntry.value

counter += ((Integer) e.value).intValue();

}

}

}

return counter;

The results of the test are shown in test6 of Table 1. All the VMs show that test6 is clearly the fastest test. Implementing the query in the collection class is usually an optimization to aim for with any collection query.

Specialize your custom Map class

Now that you have your own Map implementation, you can specialize the implementation for your query. The most obvious change you can make is to move from general Object key value pairs to data types more appropriate to your problem.

In the example here, you can specialize to String keys and int values. However, that requires quite a few changes to your Map class. You can maintain backward compatibility with the Map interface if necessary by wrapping specialized methods with generalized ones.

public Object get(Object key)

{

return new Integer(get((String) key));

}

public int get(String key) {....// the real get method

The query now has the advantage of eliminating a couple of casts and a method call:

Test7MapEntry tab[] = table;

int counter = 0;

String s;

for (int i = tab.length ; i-- > 0 ;)

{

for (Test7MapEntry e = tab[i] ; e != null ; e = e.next)

{

s = e.key;

if( ( s.indexOf("ie") != -1 )

|| ( s.indexOf("xy") != -1 )

|| ( s.indexOf("pq") != -1 ) )

{

counter += e.value;

}

}

}

return counter;

The results of the test are shown in test7 of Table 1. The HotSpot 1.3 VMs do not gain any advantage from the optimization. That may reflect the aggressive speculative optimizations that HotSpot can apply, which could have resulted in HotSpot already avoiding the casts in test6.

You can also specialize the class further to holding char[] array keys, which further improves performance times (test8 of Table 1). That optimization requires extra work since you need to implement the String methods, hashCode(), and includes() for your char[] arrays.

Further nonstandard optimizations

The previous sections showed fairly standard optimizations that you can apply to most queries on Maps. There are further nonstandard optimizations that you can apply, which are highly dependent on the nature of the map’s data. I’ll continue the tuning discussion to show two optimizations of that type.

Optimize for data

You can start by considering the data you are holding. You’ve used four char strings for keys. In the last optimization, you specialized the custom Map class to hold char[] arrays. You can take that one step further and build your whole Map class around the knowledge that you have four char keys. The map node class becomes a node holding four chars and an int value:

class Test9MapEntry

{

int hash;

char keyc1;

char keyc2;

char keyc3;

char keyc4;

int value;

Test9MapEntry next;

...

}

The query is radically changed to optimize for only four chars:

Test9MapEntry tab[] = table;

int counter = 0;

String s;

for (int i = tab.length ; i-- > 0 ;)

{

char c1, c2, c3, c4;

for (Test9MapEntry e = tab[i] ; e != null ; e = e.next)

{

c2 = e.keyc2;

c3 = e.keyc3;

c4 = e.keyc4;

if( ( (c1 = e.keyc1) == 'i' && c2 == 'e')

|| (c2 == 'i' && c3 == 'e')

|| (c3 == 'i' && c4 == 'e')

|| (c1 == 'x' && c2 == 'y')

|| (c2 == 'x' && c3 == 'y')

|| (c3 == 'x' && c4 == 'y')

|| (c1 == 'p' && c2 == 'q')

|| (c2 == 'p' && c3 == 'q')

|| (c3 == 'p' && c4 == 'q') )

{

counter += e.value;

}

}

}

return counter;

The advantage of all that effort is that you can dramatically improve your query times (see test9 in Table 1). Compared to the first query, the query in test9 runs five times faster. That tuning technique generally applies when you can specialize the Map class for the data that is being held in the map.

Perfect hashing

The technique for mapping data into a table is called hashing. All the Map class implementations so far have used the same hash function — the function from the JDK HashMap class. That looks like:

int index = (key.hashCode() & 0x7FFFFFFF) % table.length;

The function extracts some bits from the key object’s hashcode, and then uses the modulo of the table length to ensure that the index fits into the table.

Since you have your own implementation, you can see if your data would map more efficiently with a different hashing function. Preferably you want a perfect hash function that maps every element to a different index in the table. That means you wouldn’t have to use the extra linked list nodes for each entry. Ideally, you could get a hashing function that maps the keys into a table where every key occupies exactly one index without any empty index locations. That last type of hashing is called minimal perfect hashing.

In general, there are techniques for determining perfect hash functions (see “Minimal Perfect Hashing”, for example). For that particular example data, however, you can immediately identify a minimal perfect hash function:

int index = 26*26*26*(char1-'a') + 26*26*(char2-'a') + 26*(char3-'a') + (char4-'a');

Constructing a Map class for a minimal perfect hash function is extremely simple. The internal structure is a simple set of arrays since every key slots directly to one unique index. The implementation is more similar to an indexable collection than the usual hash map implementations. Essentially, the structure is an indexable collection with a mapping function to map elements to index entries. Nodes are not required, and the keys and values can use separate arrays. For my implementation, I used four char[] arrays (one for each character of the four char keys) and one int[] array for the values. The resulting query code is:

int counter = 0;

for (int i = value.length ; i-- > 0 ;)

{

char c1, c2, c3, c4;

c2 = char2[i];

c3 = char3[i];

c4 = char4[i];

if( ( (c1 = char1[i]) == 'i' && c2 == 'e')

|| (c2 == 'i' && c3 == 'e')

|| (c3 == 'i' && c4 == 'e')

|| (c1 == 'x' && c2 == 'y')

|| (c2 == 'x' && c3 == 'y')

|| (c3 == 'x' && c4 == 'y')

|| (c1 == 'p' && c2 == 'q')

|| (c2 == 'p' && c3 == 'q')

|| (c3 == 'p' && c4 == 'q') )

{

counter += value[i];

}

}

return counter;

The results of testing the query are in test10 of Table 1. The last version of the query runs more than 10 times faster than the original query. The tuning technique is suitable where data can be completely categorized so that you can identify a perfect hashing function.

Results

The tuning exercise showed that you can gain a significant speed increase in Map querying. The biggest speed improvements come from specializing the query class to be optimized for the data that is being held.

Map construction tuning

At the same time, as I tuned the query in the example shown in this article, I was tuning the maps’ construction. The optimizations are shown in the source code for the example, across the 10 classes used for the tests presented. In general, the optimizations used to speed up the queries have been complementary to those needed to speed up the map construction, so the map construction has similarly improved in speed.